“No man ever steps in the same river twice, for it is not the same river and he is not the same man.”

–Heraclitus, 5th century BCE

Suppose there is some online information about you that you’d prefer to keep private. It could be something you worry others would find unprofessional, something you consider embarrassing, or something that is inaccurate. Whatever the reason, you do not want that information to show up when people look up your name. Privacy law recognizes your right to have that information erased. In fact, one of the core principles of modern privacy law is control over personal information, which includes the right to erasure, also known as the right to be forgotten. It is an intriguing privacy right that has developed over the past two decades and is now at risk of becoming obsolete, leaving us prisoners of our own pasts.

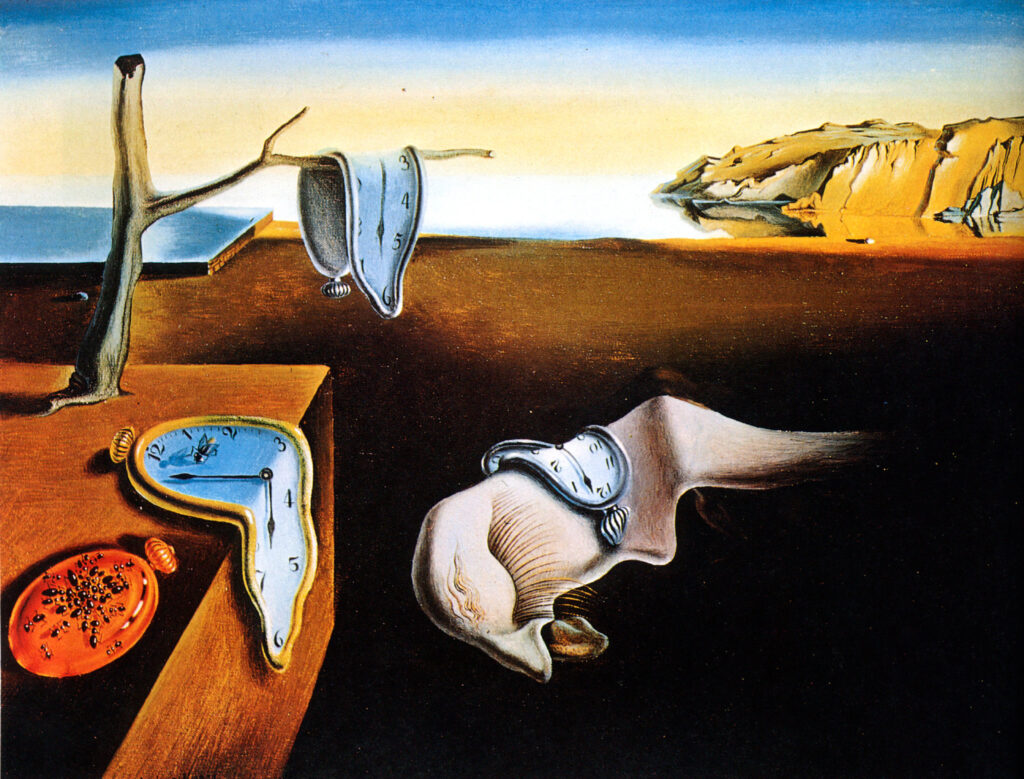

Humans are not static entities. We evolve, and our environment changes. For most of us who are not public figures, minor mistakes and indiscretions are supposed to naturally fade from the collective memory over time, allowing us to define who we are today without being limited by who we were in the past. The Information Revolution, with its endless data collection and digital archives, has flipped the default from forgetting to remembering. Human society, through technology, now remembers everything. Information about our lives, our behavior, and our personality is collected, analyzed, and stored, usually perpetually, sometimes inaccurately, oftentimes out of context.

The right to be forgotten is meant to help us regain control over our personal information. It is a privacy right linked to personal autonomy and human dignity. Our digital personas are an extension of our physical selves. If outdated or irrelevant past data creates a distorted image of us, our autonomy and dignity are damaged, as we lose control over how we are perceived by others.

In 2010, a Spanish citizen, Mario Costeja González, filed a complaint with the Spanish data protection agency against Google Spain, requesting that Google remove his personal data from its search results. González was particularly concerned about online information pertaining to foreclosure proceedings on his home. The agency sided with González, and Google appealed to the Spanish courts, which referred the case to the Court of Justice of the European Union (CJEU). The CJEU’s 2014 ruling, based on a 1995 EU directive, turned the right to be forgotten from a mainly theoretical idea into a practical human right.

In 2018, the European General Data Protection Regulation (GDPR), probably the most comprehensive and influential data privacy legislation in history, came into effect. The GDPR adopted the right to be forgotten, renaming it the right to erasure. A similar provision was also included in the California Consumer Privacy Act (CCPA) of 2018. Both laws, especially the CCPA, include exceptions and limits on the right to be forgotten, but the general principle remains: users have a legal right to request the erasure of personal information, and whoever collected and holds personal user information has a duty to comply with such a request, erase the information, and tell their service providers to do the same.

So, if you’re unhappy with what appears when people search your name, you can simply ask Google to remove the information, and that’s it, problem solved. Well, not really. A rapidly growing part of the population is now using AI to search for information. ChatGPT, the largest AI platform, now has close to 1 billion active users. Many still use Google to search, but when they do, the first thing Google gives them is AI Overviews: AI-generated summaries, powered by Google’s Gemini, that sit above the regular search results. And, as it turns out, getting an AI model to forget information is not easy.

In a traditional database, information is stored in separate, addressable units. Removing information simply means deleting data from a specific “place” within the database structure. It is a precise action that does not affect any other data stored in the database. The core function of an AI model, however, does not rely on stored records. During training, the program processes huge amounts of information to create an abstract mathematical model. Once training is over, the original information is no longer held as discrete records; it has been transformed into mathematical parameters, or weights, that allow the model to predict and produce its output. A single weight does not represent a single fact but the combined influence of many different data points. Likewise, a single fact in the training data can influence many weights. The processed information becomes an integral part of the model and cannot be removed without significantly damaging it, much like in the human brain. Even if there were a way to edit out specific training data after training is complete, it would not be effective, as the model could usually infer that information from other data.
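To make the contrast concrete, here is a minimal Python sketch. It is only an illustration under loud assumptions: the names and numbers are invented, and a two-parameter straight-line fit stands in for a real AI model with billions of weights. Deleting a record from the dictionary is a precise, local operation, while removing the same record's influence from the fitted weights requires refitting everything.

```python
# Hypothetical toy data; nothing here comes from a real system.
records = {
    "alice": {"x": 1.0, "y": 2.1},
    "bob": {"x": 2.0, "y": 3.9},
    "carol": {"x": 3.0, "y": 6.2},
    "dave": {"x": 4.0, "y": 7.8},
}


def fit_line(points):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(p["x"] for p in points) / n
    mean_y = sum(p["y"] for p in points) / n
    sxy = sum((p["x"] - mean_x) * (p["y"] - mean_y) for p in points)
    sxx = sum((p["x"] - mean_x) ** 2 for p in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


# "Training": the model keeps two weights, not the records themselves.
weights_before = fit_line(list(records.values()))

# Database erasure: one addressable entry is removed, the rest is untouched.
remaining = {name: row for name, row in records.items() if name != "dave"}

# There is no "dave weight" to delete. Removing his influence means
# refitting on the remaining data, which shifts both weights.
weights_retrained = fit_line(list(remaining.values()))

print("trained on all records:", weights_before)
print("retrained without dave:", weights_retrained)
```

In this toy case refitting takes microseconds. For a large model, the equivalent step is a full retraining run, which is why erasure requests are so much harder to honor there.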

While you cannot make an AI model forget, you can control what it says. AI platforms use post-processing rules, which are filters that aim to prevent the expression of certain facts or types of information. The problem with these filters is that the information still exists; it is just hidden. Filters can be circumvented; an AI model, like a person, can be tricked or coerced into revealing hidden information. Additionally, there is a risk that the hidden information may be revealed in the future through an update that breaks the filter, a leak, data reuse, or other means.
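A rough Python sketch of such a filter shows why hiding is weaker than forgetting. Everything in it is hypothetical: the blocked name, the sample sentences standing in for model output, and the function `filter_output`. It is not how any particular AI platform implements its rules; real filters are more elaborate, but the weakness is the same in kind.

```python
import re

# Hypothetical blocklist entry, standing in for a name from an erasure request.
BLOCKED_NAMES = ["Jane Example"]


def filter_output(text: str) -> str:
    """Replace each blocked name with a placeholder (case-insensitive exact match)."""
    for name in BLOCKED_NAMES:
        text = re.sub(re.escape(name), "[removed]", text, flags=re.IGNORECASE)
    return text


# Stand-ins for raw model output before the filter is applied.
direct = "Jane Example lost her home in a foreclosure."
obfuscated = "J-a-n-e E-x-a-m-p-l-e lost her home in a foreclosure."

print(filter_output(direct))      # the name is replaced
print(filter_output(obfuscated))  # the spelled-out variant passes through unchanged
```

The filter catches the direct mention but not the hyphenated spelling, because the underlying text was never removed, only masked on the way out.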

The right to be forgotten can still be exercised in the traditional way. You can ask Google and other search engines to stop pointing to the information, and you can ask social media platforms and websites to delete it. If the data is unavailable at the source, AI models cannot use it for training. The problem is that AI models use huge, overlapping training datasets. If the information exists somewhere, it may be included in AI training data. Additionally, an AI model can learn information even if it appears online in a different context or form, and it can also infer it by aggregating other data points. The AI model does not really need to find the actual information; it can calculate and predict it, which is what it is designed to do.
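The aggregation point can be sketched in a few lines of Python. The records, names, and the helper `infer_events` below are all invented for illustration. The sketch uses a simple deterministic join, whereas an AI model infers statistically, so treat it as an analogy: two sources that each look harmless can together reproduce a fact whose original page has been deleted.

```python
# Hypothetical records that remain online after the original article is removed.
property_records = [
    {"owner": "Jane Example", "address": "12 Sample Street"},
    {"owner": "John Placeholder", "address": "7 Test Avenue"},
]
auction_notices = [
    {"address": "12 Sample Street", "event": "foreclosure auction", "year": 2001},
]


def infer_events(person, owners, notices):
    """Join the two sources on address to recover events linked to a person."""
    addresses = {row["address"] for row in owners if row["owner"] == person}
    return [notice for notice in notices if notice["address"] in addresses]


inferred = infer_events("Jane Example", property_records, auction_notices)
print(inferred)
```

Neither source names Jane Example in connection with a foreclosure, yet combining them does.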

The right to be forgotten is a new right, as legal rights go, and it is already becoming obsolete. Without it, we have no effective control over the image we present to the world. If that image is distorted by inaccurate, irrelevant, or outdated information, there is already little we can do. AI technology is used everywhere, and we may never know why our application was rejected or why someone isn’t answering our emails. Technology designed to advance humanity as a whole is now restricting our freedom to grow as individuals, imprisoning us in our own pasts.

Mar. 15, 2026 · AI, Privacy
