There is a large and vibrant community of book lovers on social media. I see people exchanging book recommendations, publishing detailed reviews, organizing book clubs, reflecting on classic literature, and proudly sharing pictures of their prized collections, stocked bookshelves, and rare first editions.

Some members of the online book lovers community are authors themselves or aspire to publish their first novel. Writing a book is often described in literature as a long, demanding, deeply personal journey that blends passion with discipline. George Orwell put it this way in his 1946 essay “Why I Write”:

“Writing a book is a horrible, exhausting struggle, like a long bout of some painful illness. One would never undertake such a thing if one were not driven on by some demon whom one can neither resist nor understand.”

Now, imagine how an author would feel on discovering that a printed copy of their book had been bought by a technology company, along with millions of other books acquired in bulk from wholesalers, with no regard for its actual substance or content. The book was first run through a hydraulic cutting machine, which cut off its spine. Then the pages were cut again to fit an industrial high-speed scanner, which scanned them to produce a digital image. Finally, the remains of the sliced book were disposed of. The process is called “destructive scanning”:

“Anthropic “destructively scan[ned]” the print copies to create the digital ones. Anthropic or its vendors stripped the bindings from the print books, cut the pages to workable dimensions, and scanned those pages — discarding each print copy while creating a digital one in its place.”

This was done without the authors’ permission or knowledge and without any regard for copyright laws. Moreover, it is an especially disrespectful act by Anthropic, a company that claims to pioneer AI safety, morality, and the public good, as they recently asserted in their “Constitution”, which is essentially a code of ethics.

Let us go back for a minute. As reported in the Washington Post, based on recently released court filings, Anthropic began in 2024 what they called Project Panama, a secret operation to create a permanent research library for training its current and future AI models. Project Panama, which sounds like something out of a B movie, involved two morally and legally questionable strategies.

First, Anthropic spent millions of dollars buying physical books in bulk from used-book retailers and book wholesalers, “destructively scanning” them in the manner I described. Second, they downloaded millions of digital books from online pirated book libraries such as LibGen and added them to their AI training datasets.

In 2025, Anthropic settled a class-action copyright lawsuit filed by authors over Project Panama for $1.5 billion. However, the issue here is not just legal. In Claude’s Constitution, Anthropic discusses in detail its aspirations to create an AI model that is ethical, moral, and inherently good. Training such a model on data acquired through ethically, morally, or perhaps legally objectionable means creates a philosophical paradox. Moreover, an AI model is a statistical representation of human knowledge. At its most abstract level, it is an indexed library. Building such a model on disrespect for books and authors is distasteful. It is also unwise; more on that presently.

According to the Washington Post, Project Panama was run at Anthropic by Tom Turvey, a former Google executive who helped create Google Books. More than 20 years ago, Google partnered with academic and public libraries to scan books and make them publicly available online. The physical books were not damaged, and one of the project’s stated goals was to preserve old and rare books. However, Google did not seek the authors’ permission, and copyright lawsuits were filed and litigated for years. Google’s actions were eventually ruled fair use, meaning legal in the formal copyright sense. Even so, scanning books and putting them online without the authors’ knowledge is at the very least disrespectful.

In the AI industry, Anthropic was hardly the only company to follow Google Books’ example. According to a lawsuit filed against Meta, the company also used data downloaded from online pirated book libraries such as Z-Library, Anna’s Archive, and LibGen to train its AI models. Internal emails revealed in court filings show that Meta employees questioned these actions, calling them “legally not OK” and saying they didn’t feel right. I have to agree.

As for OpenAI and Microsoft, they each face multiple copyright lawsuits from authors who question how their works ended up in their AI training data. In light of the $1.5 billion Anthropic settlement and what appears to be the prevailing industry practice regarding copyrighted works, more such lawsuits are likely to follow.

It is easy to frame the use of books without their authors’ permission to train AI models as a copyright vs. progress issue. If AI companies were required to license every piece of data they use, development would stall, and costs would skyrocket. Fair use provisions were enacted in part to keep copyright law from becoming a barrier to creativity and progress. We should also acknowledge that the copyright lawsuits filed by authors against AI companies are not motivated merely by hurt feelings; a $1.5 billion settlement is a substantial incentive.

However, as I mentioned, we should look beyond the legal issue here. Not everyone is a book lover, but I believe that a company whose business is knowledge, yet which does not respect books, is sending a bad message and undermining its own long-term success.

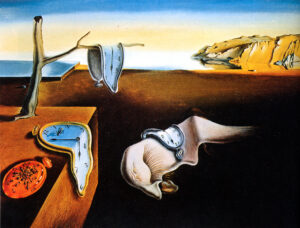

Basing a multi-billion-dollar technology on pirated books downloaded from shady websites is simply not a smart practice for a company at the cutting edge of innovation. Dismantling millions of books for the sake of technological advancement is not merely unwise; it is painfully ironic. The mass destruction of books is an act of extreme oppression and ignorance. Seeing it done as part of the mechanism of technological progress feels morally and culturally backward. The thought of millions of books being systematically cut up and discarded brings to mind book burning, which is associated with some of the worst periods in human history.

To treat a book as nothing more than raw material is to fundamentally misunderstand its iconic nature. When an industry regards the accumulated wisdom of our species as something to be harvested, it reveals a cold, utilitarian view of humanity. When a company ignores the cultural weight of destroying millions of physical books, it exhibits ignorance, crassness, and worse. What does this signal to AI company employees, customers, partners, and investors? Any organization or industry that claims to advance humanity should demonstrate much greater respect for humanity’s culture and its history.

Published: Feb. 1, 2026 | Updated: Mar. 4, 2026 | Topics: AI, Culture
