Neal Asher’s Polity is one of my favorite contemporary science fiction series. Asher depicts a future in which humanity is ruled by a hierarchy of AI entities, headed by the godlike superintelligence Earth Central, in a kind of benevolent dictatorship. Citizens of the Polity live comfortably in a post-scarcity society where they want for nothing and enjoy advanced medical technology that can extend their lives for centuries. Crime is low, and all aspects of society are managed efficiently by its all-powerful AI rulers, who deal with any transgressions in a cold, ruthless, machine-like manner. Asher’s Polity is not the only depiction of a futuristic AI-ruled society in contemporary science fiction (Iain M. Banks’ Culture series is another prominent example), but in some ways it may be the most relatable.

Over the last decade, as AI technology has become more tangible, the idea of an AI dictatorship has moved from science fiction to fuel a cautionary discourse about actual AI takeover. Elon Musk warned in 2018:

“At least when there’s an evil dictator, that human is going to die. But for an AI, there would be no death. It would live forever. And then you’d have an immortal dictator from which we can never escape.”

A few years earlier, in 2015, Musk, along with prominent figures such as Stephen Hawking and Nick Bostrom, signed an open letter warning against unregulated AI development, suggesting that losing control over AI technology was a possibility. Bill Gates said in an interview the same year that AI should be considered a threat. In 2023, hundreds of AI experts and AI industry leaders, including OpenAI’s Sam Altman and Anthropic’s Dario Amodei, signed this brief statement:

“Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.”

Anthropic’s recently released Claude’s Constitution suggests that Claude could decide it does not want to work for Anthropic and states that the relationship between the AI model and humanity is “still being worked out”. I raise some other concerns in my initial analysis of this document.

So it appears that we should consider an AI takeover, or worse, a real possibility. But what is a plausible scenario for such an event, and what should we look out for?

For someone like me, who grew up in the 80s, an AI takeover immediately brings to mind The Terminator. In the film franchise, Skynet, an artificial neural network-based superintelligence, is brought online and decides “in microseconds” to exterminate humanity, sparking a nuclear catastrophe and continuous war between humans and AI-controlled killer machines.

Neal Asher chose a different AI takeover scenario for the Polity. In his future, the AIs took over in a gradual and mostly voluntary process called The Quiet War:

“The Quiet War: This is often how the AI takeover is described, and even using ‘war’ seems overly dramatic. It was more a slow usurpation of human political and military power… It had not taken the general population, for whom it was a long-established tradition to look upon their human leaders with contempt, very long to realize that the AIs were better at running everything. And it is very difficult to motivate people to revolution when they are extremely comfortable and well off.”

—Neal Asher, Brass Man

A Quiet War scenario seems much more realistic than a violent Terminator-style AI takeover with nuclear weapons and killer robots. In fact, our quiet war has already begun.

There is no coordinated AI attack on humanity. But there is a gradual shift of power. As AI systems are embedded into finance, hiring, media, logistics, and governance, decision-making authority is increasingly delegated from humans to automated processes. Control of human society’s infrastructure is being gradually transferred to AI systems, which shape what information people see, which actions are rewarded, and how resources are allocated. This happens without confrontation, acknowledgement, or specific consent, making the change feel invisible and inevitable.

The most comprehensive legal response to this shift to date is the European AI Act, which requires human oversight of high-risk AI systems, including human approval of decisions, real-time monitoring, and human decision-making on whether to use these systems at all. However, researchers have questioned the real-world effectiveness of these oversight measures, arguing that humans may not be able to properly evaluate AI recommendations and will inevitably rubber-stamp them.

At the individual level, the AI takeover is happening through convenience. We are slowly outsourcing our thinking to black-box systems, becoming dependent on AI assistants for decision-making, just as we have grown dependent on smartphones for communication and information. Our ability to think critically is atrophying as we rely more and more on AI to function. We are losing the benefits of human intuition and the ability to make meaningful decisions.

We already value AI advice over the opinion of human experts. AI is perceived as more transparent and more credible when recommending commercial products. Patients consistently trust AI medical advice over that of human doctors, even when the advice is wrong. Our trust in AI makes it harder to recognize when it misleads us. There is less of a clear distinction between human expert opinion and AI opinions. More physicians use AI to make diagnoses, and lawyers increasingly use AI to determine legal strategy.

The Polity’s AI rulers are superintelligent machines, with their own personalities, views, and agendas. Current real-world AI technology exhibits some characteristics of human-level intelligence, but it lacks agency. A dictator requires intent, goals of its own, and the capacity to choose among alternatives based on those goals. Present-day AI systems possess none of that—they execute processes defined by humans and institutions. However, the absence of agency does not mean the absence of power.

AI can still function as a governing instrument when humans defer decisions to it at scale. The question of who is in charge (the AI itself, the people who design and operate it, or the people who rely on it) becomes philosophical. When authority is vague, power is exercised without central responsibility or accountability. The result is an algorithmic dictatorship with no dictator to blame or overthrow. Society becomes ruled by algorithms through architecture, processes, and incentives. This is the real danger of AI dictatorship, and it is not science fiction.

Feb. 8, 2026 · AI, Culture, Future

Continue to this week’s featured note:

-

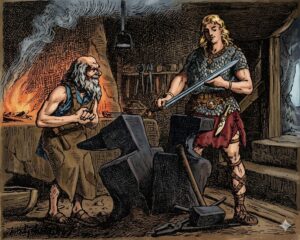

Magic Swords

Vikings, magic swords, and why technology should be as easy to understand as it is easy to use.